The Sisyphean Cycle of Hype and Fear in Medical AI

UBC Research Day · April 17, 2026

Disclaimer

I have not worked in AI + ophthalmology, except tangentially.

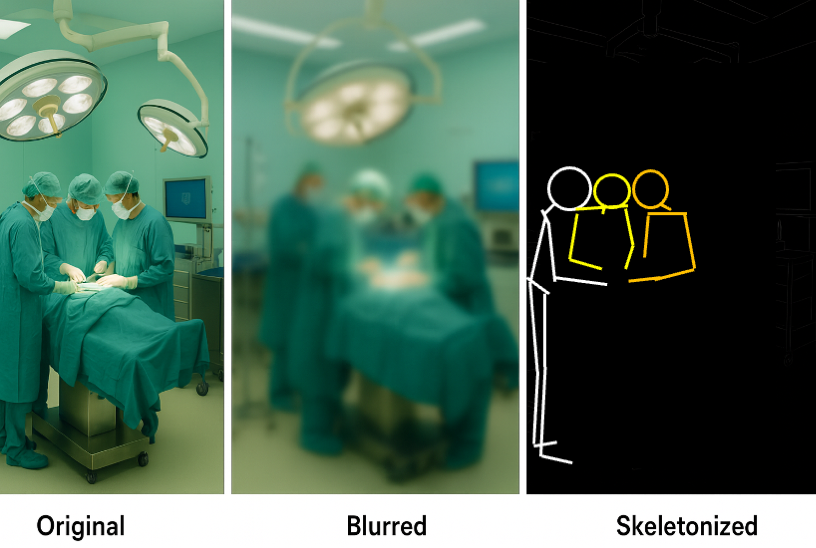

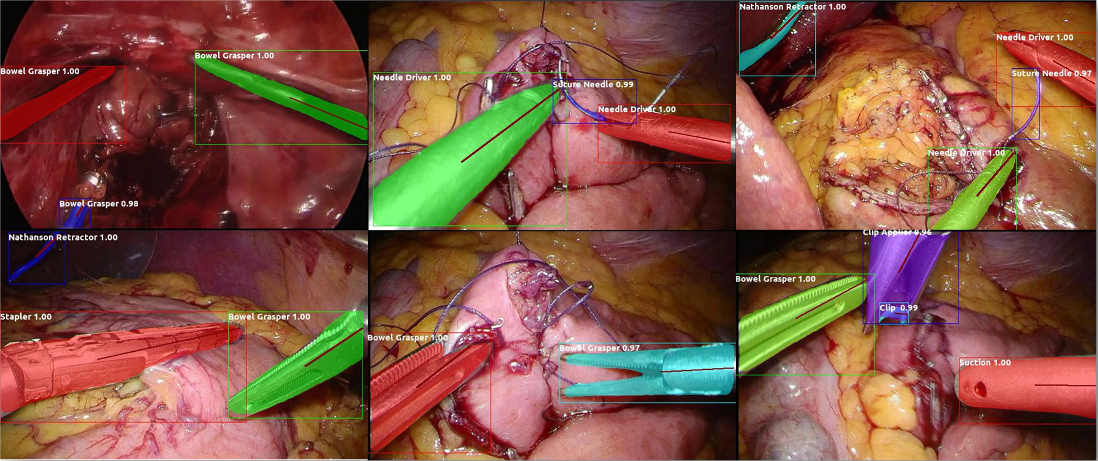

I have worked in AI + surgery (in academia, industry, and standards), which uses similar techniques, and I currently work in AI Safety, which flavours some of this talk.

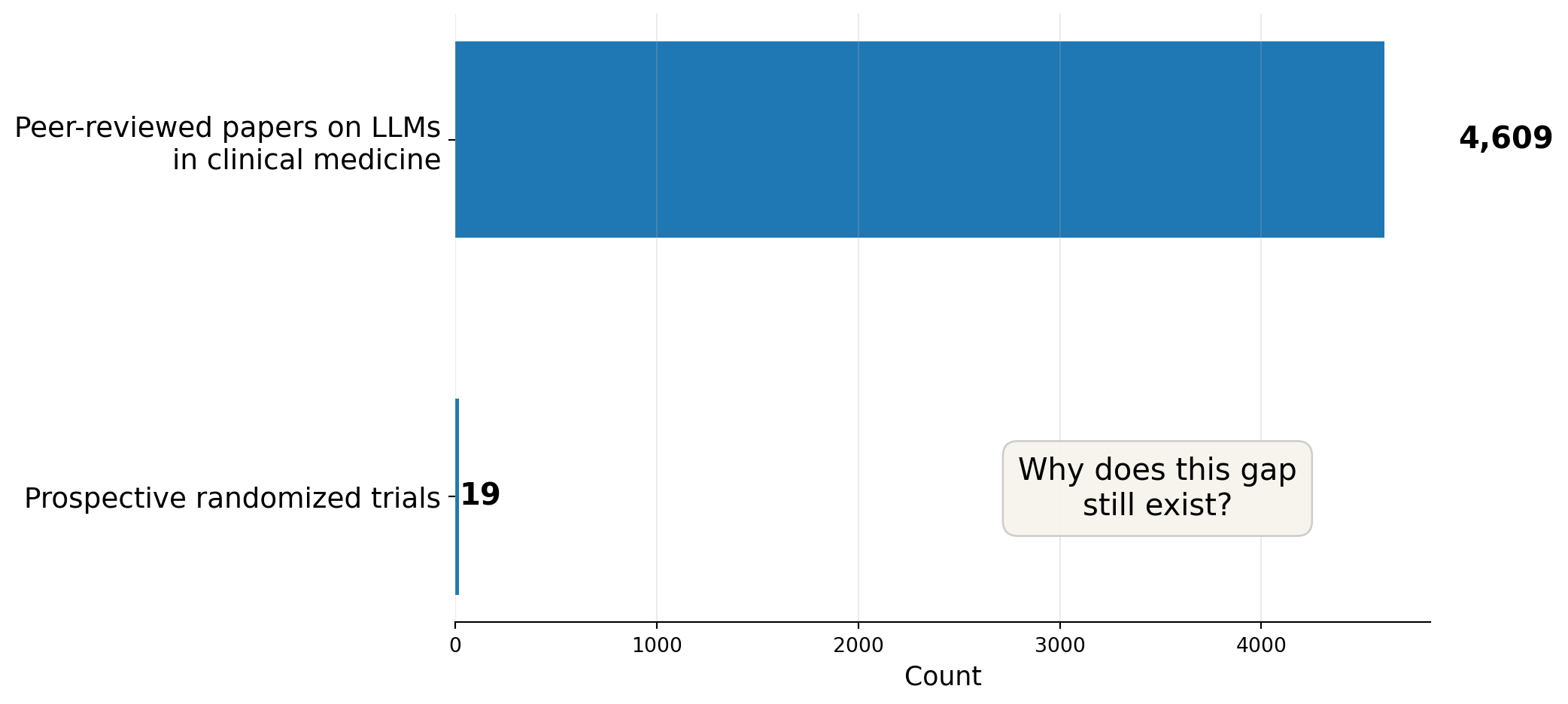

A gap worth examining

Between January 2022 and September 2025, researchers published 4,609 peer-reviewed papers on large language models in clinical medicine.

Of those, 19 were prospective randomized trials (S. F. Chen et al. 2026).
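To make the size of that gap concrete, here is a minimal arithmetic sketch. It uses only the two counts quoted above; the variable names are illustrative.

```python
# Counts as quoted above from S. F. Chen et al. (2026).
total_papers = 4609
prospective_rcts = 19

share = prospective_rcts / total_papers
papers_per_trial = total_papers / prospective_rcts

print(f"Share that were prospective randomized trials: {share:.2%}")
print(f"Roughly one trial per {papers_per_trial:.0f} papers")
```

That works out to about 0.41%, or roughly one prospective randomized trial for every 243 papers.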

Hypothes-ish

The limiting problem in medical AI is often not the model.

It is the repeated substitution of one thing for another:

- 🔬 benchmark performance for 🏥 workflow performance

- 🎯 accuracy for 👩🏽‍⚕️ clinical utility

- 🚚 deployment for 👋 the end of evaluation

🤔 Claim

🎉 Hype and 😱 fear are both downstream symptoms of these substitutions.

1. The hype ↔︎ fear cycle

The Sisyphean loop

Flowchart (loop): Compelling demo → Benchmark headlines → Pilot deployment → Workflow friction, missed edge cases, trust problems (highlighted) → Backlash / fear → Reset → back to Compelling demo

Note

The point is not that the enthusiasm is irrational.

The point is that we seem to expect things to always work perfectly.

This expectation arises from a combination of ‘category mistakes’…

Four category mistakes

- The benchmark mistake

  A model answers exam-style or tightly scripted questions well, so we infer it will help real people in messy settings.

- The metric mistake

  AUROC, accuracy, or F1 go up, so we infer patient care improved.

- The target mistake

  The model predicts something measurable, so we infer it predicts what matters.

- The lifecycle mistake

  A model clears validation, so we infer evaluation is basically complete.

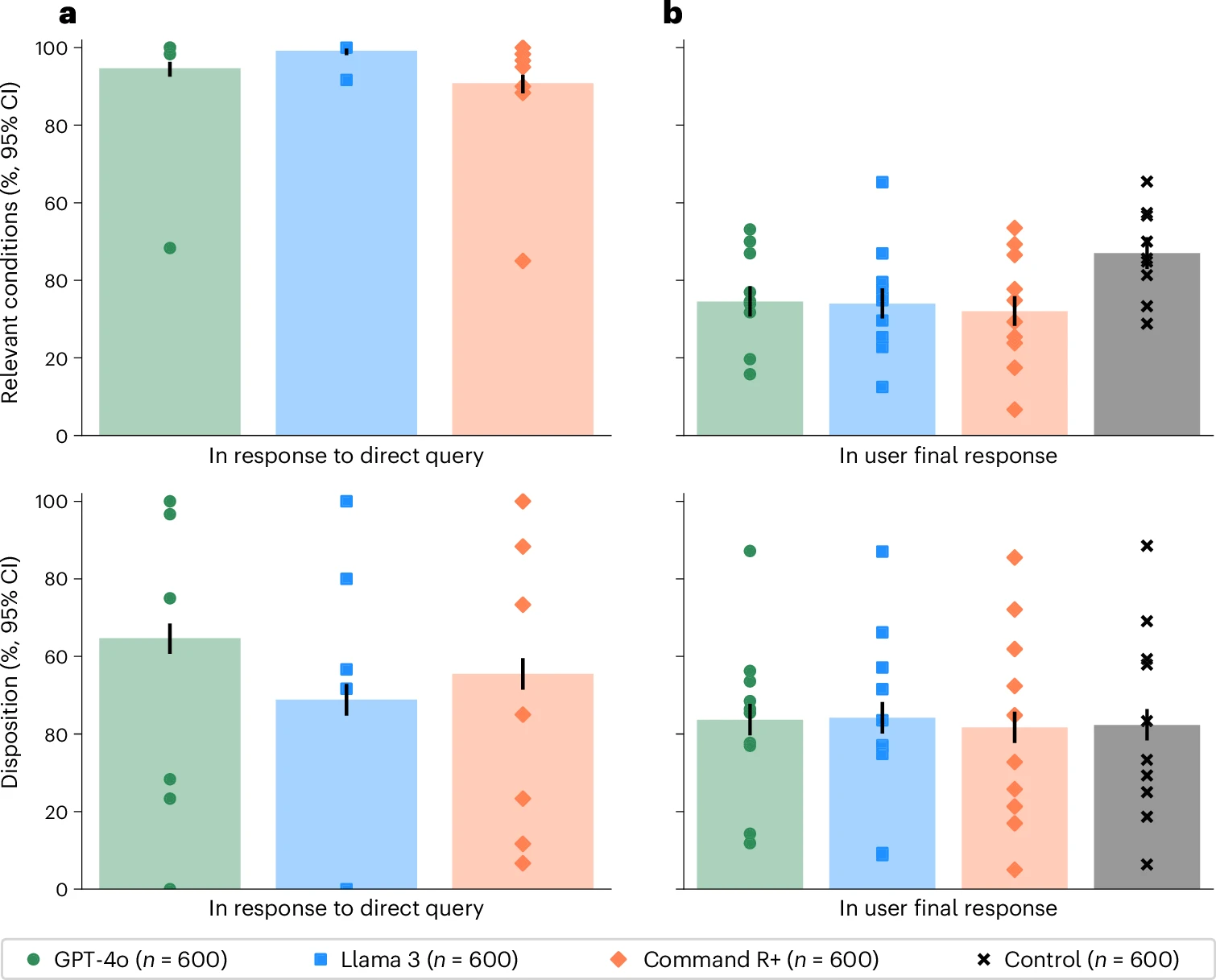

The benchmark mistake

A 2026 Nature Medicine randomized study tested whether LLMs actually help members of the public make medical decisions in realistic scenarios (Bean et al. 2026).

- Tested alone, the models looked very strong:

- 94.9% correct for condition identification

- 56.3% correct for disposition

- But people using those same models performed rather poorly.

- fewer than 34.5% identified the relevant condition

- fewer than 44.2% got the disposition right (Bean et al. 2026)

The benchmark mistake

💡 Lesson - “PEBKAC”

A model can perform well in isolation and still fail as a tool for human decision-making.

This is not a weird corner case.

Nor is it isolated to non-experts.

It is exactly what we should expect when test conditions and deployment conditions are different.

AI in Ophthalmology

A 2026 JAMA Ophthalmology “Eye on AI” theme explicitly argued that ophthalmology is one of the places where AI may be transformative, especially through image analysis and natural language processing (Liu and Bressler 2026).

AI in ophthalmology makes sense:

- visually rich data,

- specialist bottlenecks,

- screening needs,

- and increasingly digital workflows.

Tempting results \(\neq\) deployment evidence

In a 2023 eye-care study, ophthalmologists and a chatbot produced answers to 200 patient forum questions with broadly similar reviewer-rated quality.

- Reviewers distinguished human from chatbot answers with only 61% accuracy (Bernstein et al. 2023).

But that result still does not answer the harder questions:

- What happens with real patient history and liability?

- What gets omitted?

- Who catches subtle harms?

- What workflow does this create in clinic?

So what counts as “good enough”?

A practical standard

For clinical adoption, we should care much more about:

- human performance with the system

- workflow effects

- downstream harms

- generalization across settings

- post-deployment monitoring

than about a single headline number.

How do we change how we evaluate AI in medicine?

2. What evidence usually misses

Accuracy \(\neq\) utility

A 2024 Lancet Digital Health scoping review found 86 RCTs of AI in clinical practice; 70 of 86 (81%) reported positive primary endpoints (Han et al. 2024).

That is genuinely encouraging, but the same review flags reasons for caution:

- most trials were single-centre

- race or ethnicity was reported in only 22 of 86 trials

- operational efficiency was inconsistently measured and reported

- findings may reflect publication bias as much as clinical signal (Han et al. 2024)

Positive RCTs are good news.

They are not yet proof that results will hold across sites, populations, and workflows.

Negative results are not (necessarily) bad news.

For example, 50% accuracy feels like a failing grade, but does it beat human performance? If the system safely saves time, does it still let us prioritize patient care?

📊 A better scorecard

For deployment, a useful scorecard should usually include multiple families of outcomes:

- discrimination

  sensitivity, specificity, AUROC, F1

- calibration

  do predicted risks mean what they seem to mean?

- workflow

  time saved, interruptions, handoff burden, override rate

- equity

  subgroup performance, gradability, access effects

- clinical impact

  did decisions improve? did outcomes improve?
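A minimal sketch of how several of these families could be reported side by side from one evaluation log. Everything here is an assumption made for illustration: the `Case` fields, the 0.5 threshold, and the toy data are hypothetical, and the Brier score stands in only as a rough, calibration-sensitive summary. Clinical impact is deliberately absent, because it needs outcome data that a prediction log does not contain.

```python
from dataclasses import dataclass


@dataclass
class Case:
    label: int        # reference standard: 1 = disease present, 0 = absent
    risk: float       # model's predicted probability of disease
    overridden: bool  # did the clinician override the model's suggestion?
    subgroup: str     # hypothetical subgroup tag, e.g. site or camera type


def ratio(num, den):
    """Safe division: undefined rates are reported as NaN, not as zero."""
    return num / den if den else float("nan")


def scorecard(cases, threshold=0.5):
    tp = fp = tn = fn = 0
    for c in cases:
        predicted_positive = c.risk >= threshold
        if predicted_positive and c.label == 1:
            tp += 1
        elif predicted_positive:
            fp += 1
        elif c.label == 1:
            fn += 1
        else:
            tn += 1

    # Equity: sensitivity computed separately within each subgroup.
    sens_by_group = {}
    for g in sorted({c.subgroup for c in cases}):
        positives = [c for c in cases if c.subgroup == g and c.label == 1]
        detected = [c for c in positives if c.risk >= threshold]
        sens_by_group[g] = ratio(len(detected), len(positives))

    return {
        # Discrimination
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "f1": ratio(2 * tp, 2 * tp + fp + fn),
        # Calibration-sensitive summary (lower is better)
        "brier": sum((c.risk - c.label) ** 2 for c in cases) / len(cases),
        # Workflow
        "override_rate": sum(c.overridden for c in cases) / len(cases),
        # Equity
        "sensitivity_by_subgroup": sens_by_group,
    }


# Toy data, invented purely for illustration.
cases = [
    Case(1, 0.9, False, "A"), Case(1, 0.6, False, "A"),
    Case(1, 0.4, True, "B"),  Case(0, 0.2, False, "A"),
    Case(0, 0.7, True, "B"),  Case(0, 0.1, False, "B"),
    Case(1, 0.8, False, "B"), Case(0, 0.3, False, "A"),
]
card = scorecard(cases)
for name, value in card.items():
    print(name, value)
```

In this toy log the headline sensitivity is 0.75, but it splits into 1.0 for subgroup A and 0.5 for subgroup B, which is exactly the kind of difference a single headline number hides.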

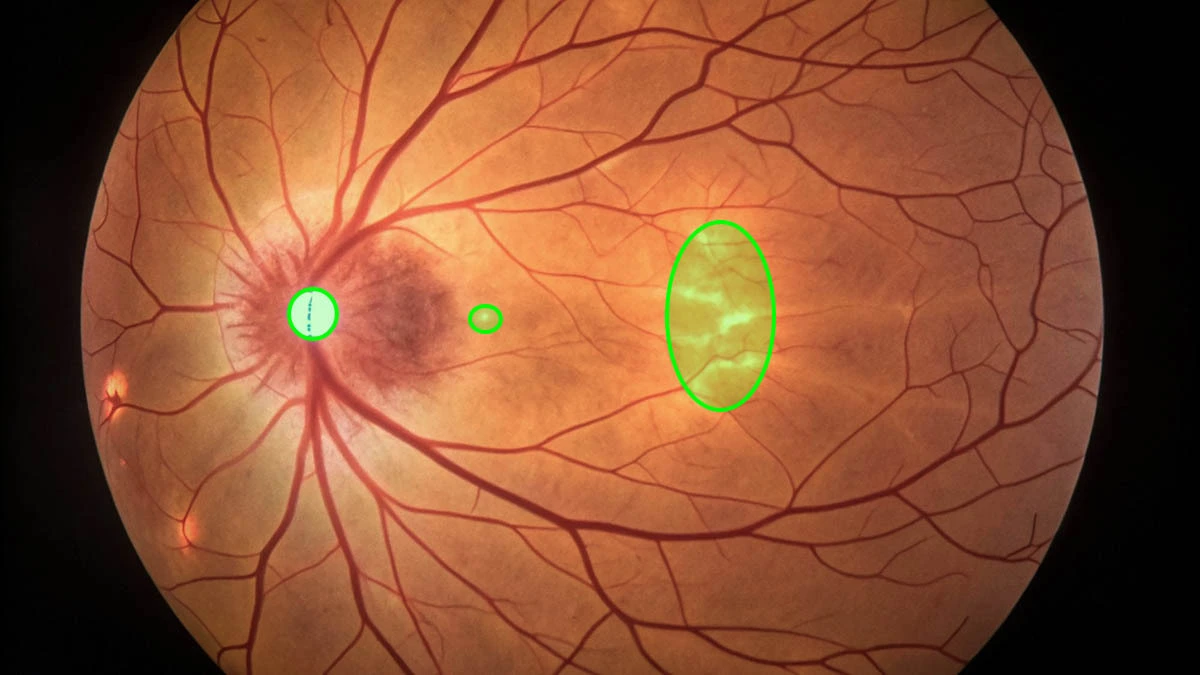

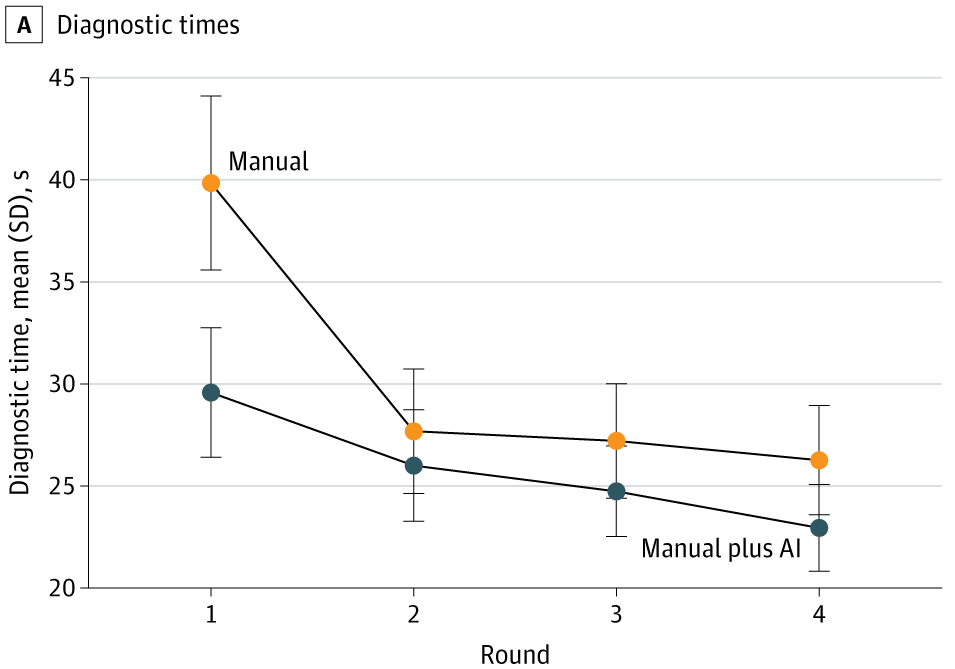

A recent ophthalmology example

A 2025 JAMA Network Open study on age-related macular degeneration built an AI-assisted workflow and evaluated clinicians across four rounds, alternating manual diagnosis and diagnosis with AI assistance (Q. Chen et al. 2025).

Findings (not just ‘accuracy’, not just once):

- AI assistance improved accuracy for 23 of 24 clinicians

- mean F1 increased from 37.71 to 45.52

- AI assistance saved roughly 10 seconds per patient initially

- after further model development, external performance improved

- but clinician + AI did not always outperform AI alone (Q. Chen et al. 2025)
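The last finding is easier to see when all three arms are scored on the same cases. The sketch below is a toy illustration with invented labels, not data from Q. Chen et al. (2025); the arm names and the `accuracy` helper are hypothetical.

```python
def accuracy(predictions, reference):
    """Fraction of cases where the prediction matches the reference standard."""
    correct = sum(p == r for p, r in zip(predictions, reference))
    return correct / len(reference)


# Invented reference labels and per-arm predictions for ten cases.
reference = [1, 0, 1, 1, 0, 1, 0, 0, 1, 0]
arms = {
    "model alone":         [1, 0, 1, 1, 0, 0, 0, 0, 1, 0],
    "clinician alone":     [1, 0, 0, 1, 1, 1, 0, 0, 0, 0],
    "clinician with model": [1, 0, 1, 1, 1, 0, 0, 0, 1, 0],
}

results = {name: accuracy(preds, reference) for name, preds in arms.items()}
for name, acc in results.items():
    print(f"{name}: {acc:.0%}")
```

In this invented example, assistance lifts the clinician from 70% to 80%, yet the assisted clinician still trails the model alone at 90%. Reporting only "AI assistance improved accuracy" would miss that ordering, which is why the comparative design matters.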

A useful evidence ladder for medical AI

From weakest to strongest:

1. benchmark or exam-style performance

2. retrospective validation

3. temporal or external validation

4. human-AI comparative workflow study

5. silent prospective evaluation

6. live clinical trial or monitored deployment

Most arguments in public discourse are made as if rung 1 or 2 already implies rung 6.
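One way to keep that distinction explicit is to treat the ladder as an ordered type, so a claim is tagged with the strongest evidence actually available. This is a sketch only: the enum names paraphrase the six entries above, and both helper functions are hypothetical.

```python
from enum import IntEnum


class EvidenceRung(IntEnum):
    """The six levels above, ordered from weakest (1) to strongest (6)."""
    BENCHMARK = 1
    RETROSPECTIVE_VALIDATION = 2
    TEMPORAL_OR_EXTERNAL_VALIDATION = 3
    HUMAN_AI_WORKFLOW_STUDY = 4
    SILENT_PROSPECTIVE_EVALUATION = 5
    LIVE_TRIAL_OR_MONITORED_DEPLOYMENT = 6


def strongest_rung(evidence):
    """Highest level for which evidence has actually been presented."""
    return max(evidence)


def rungs_not_yet_reached(evidence):
    """Levels above the strongest one presented so far."""
    top = strongest_rung(evidence)
    return [rung for rung in EvidenceRung if rung > top]


# Hypothetical vendor pitch backed by a benchmark and a retrospective study.
pitch = {EvidenceRung.BENCHMARK, EvidenceRung.RETROSPECTIVE_VALIDATION}
missing = rungs_not_yet_reached(pitch)
print("Strongest evidence:", strongest_rung(pitch).name)
print("Not yet shown:", [rung.name for rung in missing])
```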

Pause

A moment with which to sit 🪑

Think of one AI system you have recently heard about, been pitched, or seen used in a clinical setting.

Which rung of that ladder does the evidence for it occupy?

⬇️ Top-down or bottom-up ⬆️?

Do you feel that this AI system is being forced on you, or did it originate organically?

The target mistake

Note

This is a classic misalignment problem.

A widely used health-care algorithm looked “accurate”, but it predicted future cost, not future health need.

- Because cost reflects access and spending patterns, not just illness burden, Black patients were systematically underrated for extra care (Obermeyer et al. 2019).

Fixing the target would have increased the proportion of Black patients flagged for extra help from 17.7% to 46.5% (Obermeyer et al. 2019).
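The mechanism can be reproduced in a toy simulation. The sketch below is not the algorithm or data studied by Obermeyer et al. (2019); the `need` and `access` fields and all numbers are invented. Two groups have identical health need, but group B receives only part of the care it needs, so its recorded cost is lower. Ranking on the true value of each target stands in for a model that predicts that target perfectly.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    group: str
    need: int      # hypothetical count of active chronic conditions
    access: float  # hypothetical fraction of needed care actually received

    @property
    def cost(self):
        # Recorded spending reflects both illness burden and access to care.
        return self.need * self.access


# Identical need distributions; only access differs between groups.
patients = (
    [Patient("A", need, 1.0) for need in range(1, 6)]
    + [Patient("B", need, 0.55) for need in range(1, 6)]
)


def share_of_group_flagged(patients, target, group, k):
    """Flag the top-k patients by `target`; return the share belonging to `group`."""
    flagged = sorted(patients, key=target, reverse=True)[:k]
    return sum(p.group == group for p in flagged) / k


by_cost = share_of_group_flagged(patients, lambda p: p.cost, "B", k=4)
by_need = share_of_group_flagged(patients, lambda p: p.need, "B", k=4)
print(f"Group B share when the target is cost: {by_cost:.0%}")
print(f"Group B share when the target is need: {by_need:.0%}")
```

Even with a perfect predictor, the cost target flags group B for 25% of the extra-care slots, against 50% under the need target. The disparity comes entirely from the choice of label.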

The target mistake

Translation

🧮 The model may be optimizing exactly what you asked for.

🫵 That does not mean you asked for the right thing.

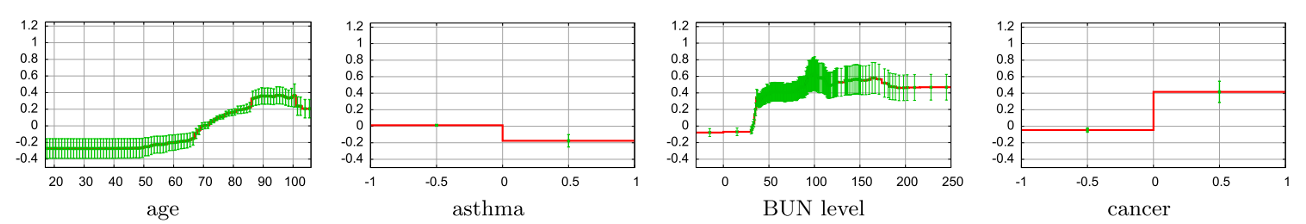

The intervention-history mistake

Note

Another canonical example: the pneumonia risk model of Caruana et al. (2015).

The model appeared to learn that asthma lowered pneumonia mortality risk.

What the model had learned was a channel effect: patients with asthma and pneumonia were more likely to be treated aggressively, including ICU admission.

So the model captured part of the care pathway, not just the disease process.

Bias is not one thing

When people say “bias” in medical AI, they often compress very different problems into one word.

At least four distinct layers matter:

- representation bias

  who is in the data?

- label bias

  what exactly do labels encode?

- deployment bias

  how does the system change behaviour?

- adoption bias

  who gets access to the tool, and under what conditions?

Ophthalmology-specific reminder

In ophthalmology, bias and failure can arise before pathology interpretation even begins.

Examples:

- nonmydriatic gradability

- camera and acquisition differences

- site-specific workflow

- population and prevalence shifts

- who gets imaged at all

- whether clinicians actually trust or act on the output

This is one reason autonomous diabetic-retinopathy systems require not just approval but thoughtful implementation (Abràmoff et al. 2018; Teng et al. 2026).

3. From hype and fear to action

The cost of not adopting is also real

😱 Fear that overgeneralizes from bad examples carries its own harms:

- diabetic retinopathy screening could have reached populations with no specialist access

- ambient documentation could have reduced burden on overstretched clinicians

- risk stratification could have surfaced patients who would otherwise be missed

The symmetric categorical error

🎉 Hype overgeneralizes from good examples.

😱 Fear overgeneralizes from bad ones.

Both collapse different interventions into a single moral object called “AI”.

A simple risk-based view

🟢 Lower-risk uses

- draft notes

- summarize long records

- retrieve policies or guidelines

- suggest patient-friendly wording

🟡 Medium-risk uses

- prioritize review

- support image interpretation

- suggest differential or referral urgency

🔴 Higher-risk uses

- autonomous diagnosis

- patient-facing triage or advice

- treatment recommendation

- denial, rationing, or eligibility decisions

What we should ask of any new system

- What decision is this system trying to improve?

- What is the real comparator?

  junior clinician? specialist? current workflow? no support?

- Which metrics matter in practice?

  not just AUROC but also calibration, throughput, overrides, subgroup performance.

- What happens out-of-distribution?

- What is the plan for monitoring after launch?

For 🧑‍⚕️ clinicians

Before trusting a model, paper, or vendor pitch, ask:

- What was the model actually trained to predict?

- How close is the study setting to my workflow?

- Is this evidence about 🤖 the model, or about 🧑‍🤝‍🧑 people using the model?

- What gets 👍 better besides the number in the abstract?

- What new 👎 failure modes does this create?

For 🧑‍🔬 researchers

The next wave of medical AI papers should spend less energy on:

- squeezing out another small benchmark gain,

- comparing one foundation model to another on synthetic cases,

and more energy on:

- multisite prospective studies,

- human-AI interaction,

- silent trials,

- subgroup consequences,

- workflow redesign,

- and what happens six months after deployment (Wiens et al. 2019; Tikhomirov et al. 2026).

For 🏥 institutions

Institutions should treat AI less like a gadget and more like a clinical intervention plus infrastructure decision.

That means:

- procurement scrutiny

- local validation 👈

- audit trails

- consent and communication

- clinician review

- decommissioning plans

- governance after launch, not just before it

Our practical reframing

🧠 Think

Not

“Is AI ready for medicine?”

But this

“Which uses of AI are justified, for whom, under what evidence, and with what continuing accountability?”

4. Sisyphus at rest

The cycle can break

The cycle is not inevitable.

The macular degeneration workflow study by Q. Chen et al. (2025) is a useful model.

- it measured human performance with and without AI, across multiple rounds, with attention to time, calibration, and external validation

Note

That is not a study about whether the model is impressive.

It is a study about whether the system — the human plus the model — improves care.

What a responsible deployment looks like

The patient trajectory (pun intended) that avoids the cycle tends to share a structure:

- Narrowly defined task with a real comparator

- Silent prospective evaluation before any care changes

- Local validation on the population that will actually use it

- Monitored rollout with audit trails and override tracking

- Pre-committed criteria for decommissioning
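The last item is the one most often left vague, so here is a minimal sketch of what "pre-committed" could mean in practice: thresholds are written down before launch and checked mechanically afterwards. The `StopCriteria` fields, the threshold values, and the monthly metrics are all hypothetical; real criteria would be set by the local governance group.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: criteria cannot be quietly edited after launch
class StopCriteria:
    """Thresholds agreed before launch; the values used below are hypothetical."""
    min_sensitivity: float
    max_override_rate: float
    max_subgroup_sensitivity_gap: float


def breached_criteria(criteria, metrics):
    """Return the names of any pre-committed criteria the monitored metrics violate."""
    breaches = []
    if metrics["sensitivity"] < criteria.min_sensitivity:
        breaches.append("sensitivity")
    if metrics["override_rate"] > criteria.max_override_rate:
        breaches.append("override_rate")
    if metrics["subgroup_sensitivity_gap"] > criteria.max_subgroup_sensitivity_gap:
        breaches.append("subgroup_sensitivity_gap")
    return breaches


criteria = StopCriteria(
    min_sensitivity=0.85,
    max_override_rate=0.30,
    max_subgroup_sensitivity_gap=0.10,
)

# Invented monitoring figures for one review period.
this_month = {
    "sensitivity": 0.82,
    "override_rate": 0.35,
    "subgroup_sensitivity_gap": 0.05,
}

breaches = breached_criteria(criteria, this_month)
if breaches:
    print("Escalate for review or decommissioning:", ", ".join(breaches))
```

The design choice worth noting is that the decision rule exists before the results do, so a disappointing month triggers a review instead of a renegotiation of the thresholds.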

Note

This is not slower than the Sisyphean rush to the peak.

It is faster — because it does not end in a backlash that resets the clock.

Suggestions

Khattak et al. (2025) provide an MLHOps checklist for making clinical ML deployable, monitorable, and maintainable:

- preparing the data

- engineering the pipeline

- deploying with the workflow in mind

- monitoring for drift

- updating deliberately

- governing for bias, privacy, and trust
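As one illustration of the "monitoring for drift" item, the sketch below computes a Population Stability Index (PSI) between the model's risk scores at validation time and its scores in a later period. PSI is a commonly used drift summary chosen here as an assumption; it is not taken from Khattak et al. (2025), and the bin edges and score lists are invented.

```python
import bisect
import math


def bin_proportions(values, edges, floor=1e-6):
    """Share of values in each bin; out-of-range values fall into the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        i = bisect.bisect_right(edges, v) - 1
        i = min(max(i, 0), len(counts) - 1)
        counts[i] += 1
    # A small floor avoids log(0) and division by zero for empty bins.
    return [max(c / len(values), floor) for c in counts]


def population_stability_index(baseline, current, edges):
    """PSI = sum over bins of (current - baseline) * ln(current / baseline)."""
    b = bin_proportions(baseline, edges)
    c = bin_proportions(current, edges)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


edges = [0.0, 1 / 3, 2 / 3, 1.0]

# Invented risk scores: at validation, and six months after deployment.
baseline_scores = [0.1, 0.1, 0.2, 0.2, 0.3, 0.4, 0.5, 0.6, 0.8, 0.9]
current_scores = [0.1, 0.2, 0.4, 0.5, 0.6, 0.7, 0.8, 0.8, 0.9, 0.9]

psi_same = population_stability_index(baseline_scores, baseline_scores, edges)
psi_shifted = population_stability_index(baseline_scores, current_scores, edges)
print(f"PSI, baseline vs itself: {psi_same:.3f}")
print(f"PSI, baseline vs current: {psi_shifted:.3f}")
```

A PSI of zero means the binned score distribution is unchanged. A larger value says the scores have shifted, which could reflect a new camera, a new referral pattern, or a changed population. It does not say the model is wrong, only that the validation evidence may no longer describe the setting.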

Choose local, free-range, organic AI!

Final thought

The cycle becomes Sisyphean when we forget, again and again through our haste, to build in (and validate!) our ⛔️safeguards⛔️.

These safeguards represent choices about what counts as evidence, what questions get asked before deployment, and how we hand off responsibilities.

🤔 Stop and think

Don’t be “anti-AI” or “pro-AI”.

Be more mindful of the “big picture”.

Thank you.

References

UBC Research Day 2026 · Keynote Lecture